An Introduction to OPC UA

What is OPC UA?

OPC UA is the next generation of OPC technology. It is a more secure, open and reliable mechanism for transferring information between Servers and Clients. It provides more open transports, better security and a more complete information model than the original OPC specifications such as OPC DA, now known collectively as “OPC Classic.” OPC UA provides a very flexible and adaptable mechanism for moving data between enterprise-type systems and the kinds of controls, monitoring devices and sensors that interact with real-world data.

OPC UA uses scalable platforms, multiple security models, multiple transport layers and a sophisticated information model to allow the smallest dedicated controller to freely interact with complex, high-end server applications. OPC UA can communicate anything from simple downtime status to massive amounts of highly complex plant-wide information.


OPC UA is a sophisticated, scalable and flexible mechanism for establishing secure connections between Clients and Servers. Features of this unique technology include:

- Scalability – OPC UA is scalable and platform-independent. It can be supported on high-end Servers and on low-end sensors. UA uses discoverable profiles to include tiny embedded platforms as Servers in a UA system.

- A Flexible Address Space – The OPC UA Address Space is organized around the concept of an Object. Objects are entities that consist of Variables and Methods and provide a standard way for Servers to transfer information to Clients.

- Common Transports and Encodings – OPC UA uses standard transports and encodings to ensure that connectivity can be easily achieved in both embedded and enterprise environments.

- Security – OPC UA implements a sophisticated Security Model that ensures the authentication of Clients and Servers, the authentication of users and the integrity of their communication.

- Internet Capability – OPC UA is fully capable of moving data over the Internet.

- A Robust Set of Services – OPC UA provides a full suite of services for Eventing, Alarming, Reading, Writing, Discovery and more.

- Certified Interoperability – The OPC Foundation certifies products against defined profiles, so a Client and a Server that support the same profile can be expected to interoperate.

- A Sophisticated Information Model – OPC UA provides more than just an Object Model. OPC UA is designed to connect Objects in such a way that true Information can be shared between Clients and Servers.

- Sophisticated Alarming and Event Management – UA provides a highly configurable mechanism for delivering alarms and event notifications to interested Clients. The Alarming and Event mechanisms go well beyond the standard change-in-value type alarming found in most protocols.

- Integration with Standard Industry-Specific Data Models – The OPC Foundation is working with several industry trade groups that define specific Information Models for their industries to support those Information Models within UA.

The History Behind OPC UA

Around 1994-1995, a bunch of factory automation experts got together and decided there had to be a better way to operate their factory floor applications. Their work eventually led to OPC 1.0 and the creation of the OPC Foundation. Their mission was simple: create a way for applications to get at data inside an automation device without having to know anything about how that device works.

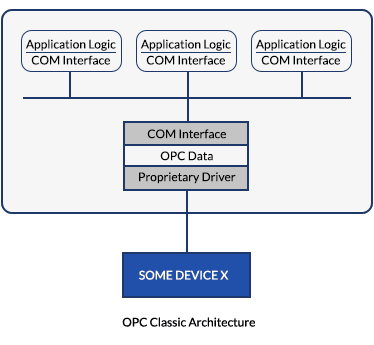

Their solution centered on COM (Component Object Model), a relatively new Microsoft technology at the time. COM provided a way for Microsoft Windows applications to share data: one application could request data, and another would supply it. The OPC founding fathers took this technology, mated it to an API that supported device protocols for automation devices, and OPC 1.0 was born. Data from the device, no matter what physical layer or protocol it supported, could be read or written by any Windows application that supported COM.

This was revolutionary and probably accelerated the adoption of PCs on the factory floor. For the first time ever, systems integrators and other factory floor application developers could implement applications without spending vast sums on driver development.

However, not long after its invention, growing application demands and a changing automation landscape began to erode the acceptability of PCs and OPC Servers in these applications. Why? COM and DCOM (the distributed version of COM) are difficult to maintain and understand without significant training. How you use them, configure them and set up authorizations varies slightly from one version of Microsoft Windows to the next.

The lack of COM/DCOM knowledge, and seemingly inconsequential acts like plugging in a data stick, are not really OPC Classic problems. The problem is that management didn’t require a checklist to follow when an OPC Server stops communicating, and didn’t have a certification program in place to ensure that the people maintaining OPC Servers were well trained in COM, DCOM and troubleshooting OPC Server problems.

The other problem is the dependency on Microsoft and Windows. COM, the base technology for OPC, is a Microsoft product. It runs only on Microsoft platforms: not Linux, not VxWorks, not anything else.

Microsoft has a well-deserved negative reputation in Industrial Automation when it comes to product lifetimes. In this industry, we generally build automation processes to last. There are a few products that are short-lived, but it’s much more common to build production processes for diapers, soap, tea and hundreds of other products that we’re going to run for the next five, ten or twenty years. Microsoft products and PCs aren’t suited to that kind of environment because they become obsolete so quickly.

The real problem with the original version of OPC, now sometimes called OPC Classic, is that it is an expensive and inefficient way to get data from a device, such as an RFID reader, into an enterprise database. There’s a PC involved: someone must set it up, maintain it, validate that it is secure, and so on. There is the cost of the hardware, the setup labor and the ongoing labor to maintain it.

But more than that, it’s very inefficient and provides incomplete data. The data must be carefully managed all the way from the RFID reader to the Server to make sure that the different systems use the correct data types, that resolution is maintained, and that the byte order (endianness, meaning which byte comes first) is correct for each system.
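To make the byte-order problem concrete, here is a minimal Python sketch showing how the same 32-bit integer is laid out in big-endian and little-endian order, and what a receiver decodes if it assumes the wrong one. The value is arbitrary and chosen only so each byte is easy to spot.

```python
import struct

# An arbitrary 32-bit reading, chosen so each byte is easy to spot.
reading = 0x12345678

# The same integer serialized in the two possible byte orders.
big_endian = struct.pack(">I", reading)     # most significant byte first
little_endian = struct.pack("<I", reading)  # least significant byte first

print(big_endian.hex())     # 12345678
print(little_endian.hex())  # 78563412

# A receiver that assumes the wrong byte order silently decodes a different number.
misread = struct.unpack("<I", big_endian)[0]
print(hex(misread))         # 0x78563412
```

OPC UA Binary encoding avoids this ambiguity by fixing the byte order of its numeric types as little-endian, whatever the platform.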

Lastly, OPC Classic was vulnerable. Microsoft platforms that support COM and DCOM are vulnerable to sophisticated attacks from all sorts of viruses and malware.

Even though OPC Classic was wildly successful and worked well when managed right, there was enough dissatisfaction with the security issues, platform issues and data inconsistencies that a successor was planned for it. That successor is OPC UA (Unified Architecture).

What Does OPC UA Add as a New Communication Architecture?

- Platform independence – OPC UA no longer relies on Microsoft COM/DCOM. UA is independent of the operating system, including real-time operating systems (RTOS), and can be implemented on Linux.

- Robust data models – OPC UA offers a highly flexible and robust information model. It also establishes relationships between data items and systems that are important in today’s connected world.

- Security – OPC UA offers endpoint authentication and encryption, and removes the dependence on DCOM that OPC DA had.

Why Use OPC UA?

OPC UA is the first communication technology built specifically to live in that “no man’s land” where data must traverse firewalls, specialized platforms, and security barriers to arrive at a place where that data can be turned into information. Most other technologies are designed for a specific application. OPC UA is more flexible in that it provides connectivity advantages for all levels of the automation architecture.

OPC UA is designed to be well-suited for connecting the factory floor to the enterprise. Servers can support the transports used in many traditional IT type applications. Servers can connect with these IT applications using SOAP (Simple Object Access Protocol) or HTTP (Hypertext Transfer Protocol). HTTP is, in fact, the foundation of the data communication used by the World Wide Web.

A key differentiator for OPC UA is that the mapping to the transport layer is totally independent of the OPC UA services, messaging, information and object models. That way, if additional transports are defined in the future, the same OPC UA Information Model, object model and messaging services can be applied to that new transport. In that sense, OPC UA is future-proof: adding a transport does not require changes to the layers above it.

OPC UA Transport Layers

Many of the common Industrial Automation (IA) protocol technologies limit the available transports. Devices that want to communicate with Programmable Controllers (PLCs) must use the transport defined for the communication technology supported by that brand of PLC. The transports of OPC UA are not limited in this way. The technology space where OPC UA operates is much more extensive and requires support for many different transports and the capability to add new transports in the future. Transports are about how an OPC UA message is moved from your node to some other node on the network.

Once an OPC UA application forms a request or response message, it must send it somewhere. Transports are the low-level mechanisms for moving those serialized messages from one place to another.

OPC UA operates in a very broad technology space, and devices can support multiple transports or even custom or proprietary transports. OPC UA devices can be anything from a factory floor sensor or actuator to a Programmable Controller, a Human Interface Device, a Windows Server operating a massive Oracle database, or an undersea pipeline controller. A rich set of supported transports is required to support the OPC UA mission of being a completely scalable solution.

The OPC UA specification defines several transports; the profiles a Client or Server implements determine which of them it supports:

- SOAP / HTTP TRANSPORTS – HTTP (HyperText Transfer Protocol) is the stateless, request-response protocol that you use every time you access a web page. SOAP (Simple Object Access Protocol) is an XML messaging protocol that provides a mechanism for applications to encode messages to other applications.

- HTTPS TRANSPORT – Hyper Text Transfer Protocol Secure (HTTPS) is the secure version of HTTP: all communication between the two endpoints is encrypted with TLS. Just as with HTTP, SOAP can be used as the request-response protocol to move OPC UA requests between Clients and Servers.

- UA TCP TRANSPORT – UA TCP is a simple TCP-based protocol designed for Server devices lacking the resources to implement XML encoding and HTTP/SOAP type transports. UA TCP uses binary encoding and a simple message structure that can be implemented on low-end Servers.
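As an illustration of how simple the UA TCP binary framing is, the Python sketch below builds a Hello message, the first message a Client sends after opening the TCP connection. The layout (3-byte message type, 1-byte chunk type, little-endian UInt32 message size, then five UInt32 fields and the endpoint URL as a length-prefixed string) follows our reading of Part 6 of the OPC UA specification and should be checked against it. The buffer sizes, the `opc.tcp://localhost:4840` endpoint and the helper names are our own example choices.

```python
import struct

def encode_ua_string(value: str) -> bytes:
    """UA Binary String: Int32 byte length followed by the UTF-8 bytes."""
    data = value.encode("utf-8")
    return struct.pack("<i", len(data)) + data

def build_hello(endpoint_url: str,
                receive_buffer_size: int = 65535,
                send_buffer_size: int = 65535,
                max_message_size: int = 0,
                max_chunk_count: int = 0) -> bytes:
    """Build a UA TCP Hello message; every integer is little-endian."""
    body = struct.pack(
        "<5I",
        0,                    # ProtocolVersion
        receive_buffer_size,
        send_buffer_size,
        max_message_size,     # 0 means no limit
        max_chunk_count,      # 0 means no limit
    )
    body += encode_ua_string(endpoint_url)
    # 8-byte header: "HEL", chunk type "F" (final), then the total message size.
    header = b"HEL" + b"F" + struct.pack("<I", 8 + len(body))
    return header + body

def parse_header(message: bytes) -> tuple:
    """Split the 8-byte header back into (message type, chunk type, size)."""
    msg_type = message[0:3].decode("ascii")
    chunk_type = message[3:4].decode("ascii")
    (size,) = struct.unpack_from("<I", message, 4)
    return msg_type, chunk_type, size

hello = build_hello("opc.tcp://localhost:4840")
print(parse_header(hello))  # ('HEL', 'F', 56)
print(len(hello))           # 56
```

The whole message is 56 bytes: an 8-byte header, 20 bytes of fixed fields and a 28-byte string. That is the kind of structure a low-end Server can parse without an XML or HTTP stack.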

What makes OPC UA transports so powerful is that OPC UA requests to Read or Write an Attribute can use standard Web Services technologies. UA requests and responses can be encoded as XML, placed inside a SOAP request, and transferred to an IT application that already knows how to handle the HTTP, the SOAP message, and the XML. OPC UA has an essentially “free” mechanism for sending messages between the factory floor and IT devices.
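The Web Services path can be sketched the same way. The Python example below wraps a simplified Read request in a SOAP 1.2 envelope using only the standard library. The envelope namespace is the standard SOAP 1.2 one; the OPC UA types namespace, the element layout and the node id `ns=2;s=Loop1.Setpoint` are assumptions for illustration, and a real request would also carry a RequestHeader and other fields defined by the OPC UA XML schema. Attribute id 13 is the Value attribute.

```python
import xml.etree.ElementTree as ET

SOAP_NS = "http://www.w3.org/2003/05/soap-envelope"      # standard SOAP 1.2 namespace
UA_NS = "http://opcfoundation.org/UA/2008/02/Types.xsd"  # assumed OPC UA types namespace

ET.register_namespace("s", SOAP_NS)
ET.register_namespace("ua", UA_NS)

def build_read_envelope(node_id: str, attribute_id: int = 13) -> str:
    """Wrap a simplified ReadRequest (no RequestHeader) in a SOAP envelope."""
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    request = ET.SubElement(body, f"{{{UA_NS}}}ReadRequest")
    nodes = ET.SubElement(request, f"{{{UA_NS}}}NodesToRead")
    item = ET.SubElement(nodes, f"{{{UA_NS}}}ReadValueId")
    node = ET.SubElement(item, f"{{{UA_NS}}}NodeId")
    ET.SubElement(node, f"{{{UA_NS}}}Identifier").text = node_id
    # Attribute id 13 selects the Value attribute.
    ET.SubElement(item, f"{{{UA_NS}}}AttributeId").text = str(attribute_id)
    return ET.tostring(envelope, encoding="unicode")

xml_text = build_read_envelope("ns=2;s=Loop1.Setpoint")
print(xml_text)
```

Nothing in the envelope is specific to automation: an IT application that already speaks SOAP over HTTP can carry it with the tools it has.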

OPC UA Data Model

It all starts with an object. An object that could be as simple as a single piece of data or as sophisticated as a process, a system or an entire plant.

It might be a combination of data values, meta-data, and relationships. Take a dual loop controller. The dual loop controller object would relate variables for the setpoints and actual values for each loop. Those variables would reference other variables that contain meta-data like the temperature units, high and low setpoints, and text descriptions.

The object might also make available subscriptions to get notifications on changes to the data values or the meta-data for that data value. A client accessing that one object can get as little data as it wants (single data value) or an extremely rich set of information that describes that controller and its operation in detail.
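The dual loop controller can be sketched as a tiny, hypothetical address space in Python. Each loop is an object that references Setpoint and Actual variables, and each variable carries its own meta-data. The class and helper names, units and limits are invented for illustration; they are not an OPC UA SDK API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

@dataclass
class Node:
    """A simplified address-space node: name, optional value, meta-data and references."""
    name: str
    value: Optional[float] = None
    metadata: Dict[str, object] = field(default_factory=dict)
    references: List["Node"] = field(default_factory=list)

def make_loop(name: str, setpoint: float, actual: float) -> Node:
    """One control loop: an object referencing its Setpoint and Actual variables."""
    meta = {"units": "degC", "low_limit": 0.0, "high_limit": 250.0}
    return Node(name, references=[
        Node(f"{name}.Setpoint", setpoint, dict(meta, description="Target temperature")),
        Node(f"{name}.Actual", actual, dict(meta, description="Measured temperature")),
    ])

controller = Node("DualLoopController", references=[
    make_loop("Loop1", 120.0, 118.5),
    make_loop("Loop2", 80.0, 80.2),
])

def find(node: Node, name: str) -> Optional[Node]:
    """Depth-first search that follows references from an object."""
    if node.name == name:
        return node
    for child in node.references:
        hit = find(child, name)
        if hit is not None:
            return hit
    return None

def describe(node: Node) -> dict:
    """The 'rich' view: values plus meta-data, following every reference."""
    return {
        "name": node.name,
        "value": node.value,
        "metadata": node.metadata,
        "references": [describe(child) for child in node.references],
    }

# A minimal client reads a single value...
print(find(controller, "Loop1.Actual").value)   # 118.5
# ...while a richer client walks the whole object.
print(len(describe(controller)["references"]))  # 2
```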

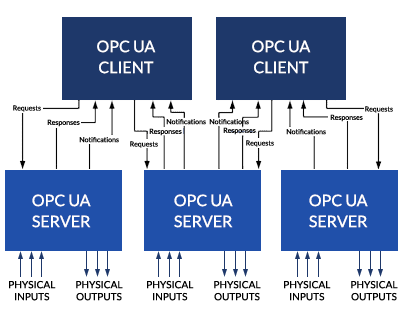

OPC UA is, like its factory floor cousins, composed of a client and a server. The client device requests information. The server device provides it. But as we can see from the loop controller example, what the OPC UA server does is much more sophisticated than what an EtherNet/IP, Modbus TCP or PROFINET IO server does.

OPC UA Services

An OPC UA server models data, information, processes, and systems as objects and presents those objects to clients in ways that are useful to vastly different types of client applications. Better yet, the OPC UA server provides sophisticated services that the client can use, including:

- Discovery and Browse Services – Services that clients can use to find servers and to learn what objects are available, how they are linked to other objects, what kind of data and what type is available, and what meta-data is available that can be used to organize, classify and describe those objects and values

- Subscription Services – Services that clients can use to identify what kind of data is available for notifications, and to decide how little, how much and when they wish to be notified about changes, not only to data values but to the meta-data and structure of objects

- Query Services – Services that deliver address space information to a client in bulk

- Node Services – Services that clients can use to create, delete and modify the structure of the data maintained by the server

- Method Services – Services that clients can use to make function calls associated with objects
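To show how some of these service groups relate to the address space, here is a toy in-memory server in Python. `ToyServer` and its methods are hypothetical stand-ins, not a real SDK: `browse` plays the role of walking the address space, `add_node` and `delete_node` the Node Services, and `call` the Method Services. Subscriptions and Query are left out to keep it short.

```python
from typing import Callable, Dict, List

class ToyServer:
    """Hypothetical in-memory stand-in for a few OPC UA service groups."""

    def __init__(self) -> None:
        self.children: Dict[str, List[str]] = {"Objects": []}
        self.values: Dict[str, object] = {}
        self.methods: Dict[str, Callable[..., object]] = {}

    # Node Services: create and delete parts of the address-space structure.
    def add_node(self, parent: str, name: str, value: object = None) -> None:
        self.children[parent].append(name)
        self.children[name] = []
        self.values[name] = value

    def delete_node(self, parent: str, name: str) -> None:
        for child in list(self.children[name]):  # delete descendants first
            self.delete_node(name, child)
        self.children[parent].remove(name)
        del self.children[name]
        self.values.pop(name, None)
        self.methods.pop(name, None)

    # Method Services: functions attached to objects, executed by the server.
    def add_method(self, parent: str, name: str, fn: Callable[..., object]) -> None:
        self.add_node(parent, name)
        self.methods[name] = fn

    def call(self, name: str, *args: object) -> object:
        return self.methods[name](*args)

    # Browsing: which nodes an object links to, and reading their values.
    def browse(self, name: str) -> List[str]:
        return list(self.children[name])

    def read(self, name: str) -> object:
        return self.values[name]

server = ToyServer()
server.add_node("Objects", "Tank1")
server.add_node("Tank1", "Tank1.Level", 42.0)
server.add_method("Tank1", "Tank1.PercentFull",
                  lambda capacity: server.read("Tank1.Level") * 100 / capacity)

links = server.browse("Tank1")
print(links)                                    # ['Tank1.Level', 'Tank1.PercentFull']
percent = server.call("Tank1.PercentFull", 200)
print(percent)                                  # 21.0
server.delete_node("Objects", "Tank1")
print(server.browse("Objects"))                 # []
```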

An OPC UA server is a data engine that gathers information and presents it in ways that are useful to various types of OPC UA clients: devices on the factory floor such as an HMI, a proprietary control program such as a recipe manager, or a database, dashboard or sophisticated analytics program running on an enterprise server.

Data is not necessarily limited to a single physical node. Objects can reference other objects, data variables, data types and more that exist on other nodes elsewhere in the subnet, elsewhere in the architecture or even elsewhere on the Internet.

OPC UA Device Types

The OPC UA Server

Industrial Servers typically provide the physical interface to the real world. Servers measure physical properties, indicate status, initiate physical actions and perform all sorts of physical measurements and activations in the real world under the direction of a remote Client device. Servers are where the physical world meets the digital world.

The specific capabilities of an OPC UA Server are described by the Profile it supports. A Profile indicates to other devices (electronically) and to people (human-readable form) what specific features of the OPC UA specification are supported. Engineers can determine from the Profile if this device is suitable for an application. A Client device can interrogate the Server and determine if it is compatible with the Client and its application and if it should initiate the connection process with the device.
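At its simplest, a Client's profile check is a set comparison. In this Python sketch the feature names are hypothetical placeholders; real Profiles and Facets are identified by URIs published by the OPC Foundation, and a real Client would read them from the Server rather than from a constant.

```python
from typing import FrozenSet, Iterable, List

# Hypothetical feature names standing in for Profile/Facet URIs.
SERVER_FEATURES: FrozenSet[str] = frozenset({
    "Core", "AttributeRead", "AttributeWrite", "DataChangeSubscription", "MethodCall",
})

def missing_features(server: Iterable[str], required: Iterable[str]) -> List[str]:
    """Features the application needs that the Server does not advertise."""
    return sorted(set(required) - set(server))

def is_compatible(server: Iterable[str], required: Iterable[str]) -> bool:
    return not missing_features(server, required)

hmi_needs = {"AttributeRead", "DataChangeSubscription"}
historian_needs = {"AttributeRead", "HistoricalAccess"}

print(is_compatible(SERVER_FEATURES, hmi_needs))           # True
print(missing_features(SERVER_FEATURES, historian_needs))  # ['HistoricalAccess']
```

Returning the missing features, not just a yes/no, gives the engineer the same answer in human-readable form.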

An OPC UA Server announces its availability to interested Client devices, it provides a list of its capabilities and functionality to interested Clients, it provides notifications of different kinds of events, it executes small pieces of logic called methods, it provides address space information in bulk to Clients (Query service), it provides browsing services so that a Client can walk through its address space, and it can allow Clients to modify the node structure of its address space.

The OPC UA Client

In most industrial networking technologies, there is a controlling device: a device that connects to and controls one or more end devices. In OPC UA, a device of this type is known as an OPC UA Client. Like controlling devices in these other technologies, an OPC UA Client device sends message packets to Server devices and receives responses from its Server devices. But beyond this basic functionality, an OPC UA Client device is fundamentally more sophisticated than controllers in other technologies.

There are eight concepts that are important to remember when thinking about OPC UA Clients:

- Client devices request services from OPC UA Server devices. Server devices send response messages and notifications to the OPC UA Client device.

- The Subscription Service Set, which drives notifications, and the Read Service of the Attribute Service Set are the primary services that OPC UA Clients use to interact with the address space on an OPC UA Server.

- Clients find OPC UA Server devices in multiple ways. Clients can find Servers using traditional configuration, by using a Local Discovery Server, by using a Local Discovery Server with a Multicast Extension, or by using a Global Discovery Server.

- Once a Client finds a Server, it obtains the list of available endpoints and selects an endpoint that supports the security profile and transport that matches its application requirements.

- Clients begin the process of accessing an OPC UA Server by creating a channel: a long-term connection between the Client and the OPC UA Server. Channels are the authenticated connections between two devices.

- Once the channel is established, Clients create sessions: long-term, logical connections between OPC UA applications. A session is the authorized connection between the Client’s application and the Server’s address space.

- Clients can subscribe to data value changes, alarm conditions, and any results from programs executed by Servers. Servers publish notifications back to the Client when those items are triggered.

- Clients invoke Methods, which are small program segments executed by the Server. Results are returned to the Client in the response to the Method call, or in a Notification if the Client subscribes to it.
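Endpoint selection, described in the list above, is easy to sketch. The Python example below filters a list of endpoints by transport scheme, minimum message security mode and allowed security policy, then prefers the strongest mode. The mode names (`None`, `Sign`, `SignAndEncrypt`) and the policy name `Basic256Sha256` come from OPC UA; the `Endpoint` class, the host name `plc1` and the ranking rule are our assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional

# OPC UA message security modes, ranked weakest to strongest (the ranking is our assumption).
MODE_RANK = {"None": 0, "Sign": 1, "SignAndEncrypt": 2}

@dataclass(frozen=True)
class Endpoint:
    url: str              # transport is implied by the URL scheme, e.g. "opc.tcp" or "https"
    security_policy: str  # e.g. "Basic256Sha256"
    security_mode: str    # "None", "Sign" or "SignAndEncrypt"

def select_endpoint(endpoints: List[Endpoint], scheme: str, min_mode: str,
                    allowed_policies: List[str]) -> Optional[Endpoint]:
    """Pick the strongest endpoint that matches the application's requirements."""
    candidates = [
        ep for ep in endpoints
        if ep.url.startswith(scheme + "://")
        and MODE_RANK[ep.security_mode] >= MODE_RANK[min_mode]
        and ep.security_policy in allowed_policies
    ]
    return max(candidates, key=lambda ep: MODE_RANK[ep.security_mode], default=None)

# A hypothetical endpoint list, as a Server might return it.
offered = [
    Endpoint("opc.tcp://plc1:4840", "None", "None"),
    Endpoint("opc.tcp://plc1:4840", "Basic256Sha256", "Sign"),
    Endpoint("opc.tcp://plc1:4840", "Basic256Sha256", "SignAndEncrypt"),
    Endpoint("https://plc1:443", "Basic256Sha256", "SignAndEncrypt"),
]

chosen = select_endpoint(offered, "opc.tcp", "Sign", ["Basic256Sha256"])
print(chosen.security_mode)  # SignAndEncrypt
```

After choosing an endpoint, a real Client would open a secure channel to it and then create and activate a session (the CreateSession and ActivateSession services) before reading or subscribing.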

OPC UA Security

When a request to connect to a server is received, the server validates the request, decrypts it and validates the certificate. Once a valid request for authentication or authorization is received and decoded, the next step is to determine whether the server should accept the connection from that device or allow access to that user. It’s very important to realize that the OPC UA software stack does not make this decision. It passes the request to the application, which makes the final determination.

The OPC UA specification says nothing about how an application validates an authentication or authorization request. It is entirely up to the application as to how it should determine what devices can connect to it and what users can access what resources.

OPC UA decouples its request-response messaging protocol from serialization, security and transport. An OPC UA service request, such as a Read request for the value of an Attribute, can be serialized in multiple encodings (binary or XML). It can also be secured with one or more security protocols (OPC UA Secure Conversation or WS Secure Conversation) and be transported with one or more transports (UA TCP, HTTPS or SOAP/HTTP). In most other industrial protocols, there is no such division between these functions.
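The separation of layers can be illustrated as three swappable functions composed by a single `send` call. In this Python sketch the encoders are toy stand-ins for UA Binary and UA XML, the HMAC signer stands in for a security protocol, and two in-memory lists stand in for transports such as UA TCP and HTTPS. None of these are the real OPC UA wire formats, and all names are hypothetical.

```python
import hashlib
import hmac
import struct
import xml.etree.ElementTree as ET
from typing import Callable, List

# A Read request reduced to two fields: which node, and which attribute (13 = Value).
request = {"node_id": "ns=2;s=Loop1.Actual", "attribute_id": 13}

# Encoding layer: toy stand-ins for UA Binary and UA XML, not the real schemas.
def encode_binary(msg: dict) -> bytes:
    node = msg["node_id"].encode("utf-8")
    return struct.pack("<i", len(node)) + node + struct.pack("<I", msg["attribute_id"])

def encode_xml(msg: dict) -> bytes:
    root = ET.Element("ReadRequest")
    ET.SubElement(root, "NodeId").text = msg["node_id"]
    ET.SubElement(root, "AttributeId").text = str(msg["attribute_id"])
    return ET.tostring(root)

# Security layer: either pass-through, or append an HMAC-SHA256 signature.
def no_security(payload: bytes) -> bytes:
    return payload

def make_signer(key: bytes) -> Callable[[bytes], bytes]:
    def sign(payload: bytes) -> bytes:
        return payload + hmac.new(key, payload, hashlib.sha256).digest()
    return sign

def send(msg: dict,
         encode: Callable[[dict], bytes],
         secure: Callable[[bytes], bytes],
         transport: Callable[[bytes], None]) -> None:
    """The service layer composes the three layers without knowing which ones are used."""
    transport(secure(encode(msg)))

# Transport layer: in-memory lists standing in for, say, a UA TCP and an HTTPS connection.
tcp_wire: List[bytes] = []
https_wire: List[bytes] = []

send(request, encode_binary, make_signer(b"demo-key"), tcp_wire.append)
send(request, encode_xml, no_security, https_wire.append)

print(len(tcp_wire[0]))  # 59: 27 encoded bytes + 32-byte signature
print(https_wire[0])
```

The same `request` travels two completely different paths, and `send` never changes: that is the property the paragraph above describes.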

OPC UA makes a distinction between Client applications and users. A Server may authorize a connection with a Client application and create a communication channel, while not authorizing a connection with a user of that Client application. Applications and users are authenticated and authorized separately.
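The split between the stack and the application, and between application and user authorization, can be sketched as follows. The `ApplicationPolicy` class, the placeholder certificate bytes and the user table are hypothetical. The stack is reduced to a `handle_connect` function that is assumed to have already validated the certificate and only forwards the two decisions to the application's policy.

```python
import hashlib
import hmac
import os
from dataclasses import dataclass
from typing import Dict, Optional, Set, Tuple

def hash_password(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)

@dataclass
class ConnectRequest:
    client_cert_der: bytes          # Client application certificate (already validated by the stack)
    username: Optional[str] = None  # user identity, checked separately from the application
    password: Optional[str] = None

class ApplicationPolicy:
    """Hypothetical application-side rules; the stack leaves these decisions to the app."""

    def __init__(self, trusted_thumbprints: Set[str], users: Dict[str, Tuple[bytes, bytes]]):
        self.trusted = trusted_thumbprints
        self.users = users  # username -> (salt, password hash)

    def accept_application(self, cert_der: bytes) -> bool:
        # Look the certificate up by its SHA-1 thumbprint.
        return hashlib.sha1(cert_der).hexdigest() in self.trusted

    def accept_user(self, username: Optional[str], password: Optional[str]) -> bool:
        if username is None or password is None or username not in self.users:
            return False
        salt, expected = self.users[username]
        return hmac.compare_digest(hash_password(password, salt), expected)

def handle_connect(request: ConnectRequest, policy: ApplicationPolicy) -> str:
    """Stand-in for the stack: it forwards each decision to the application's policy."""
    if not policy.accept_application(request.client_cert_der):
        return "rejected: application not trusted"
    if not policy.accept_user(request.username, request.password):
        return "channel open, session refused: user not authorized"
    return "channel open, session active"

hmi_cert = b"placeholder DER bytes for the HMI certificate"
salt = os.urandom(16)
policy = ApplicationPolicy(
    trusted_thumbprints={hashlib.sha1(hmi_cert).hexdigest()},
    users={"operator": (salt, hash_password("correct horse", salt))},
)

print(handle_connect(ConnectRequest(hmi_cert, "operator", "correct horse"), policy))
print(handle_connect(ConnectRequest(hmi_cert, "guest", "guess"), policy))
print(handle_connect(ConnectRequest(b"unknown app"), policy))
```

Because the two checks are independent, a trusted application with an unknown user still gets a channel but no session.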

OPC UA is designed to counter the threats most likely to occur in a manufacturing plant, on operating equipment with widely varying amounts of resources and processing power. Threats such as message flooding, unauthorized disclosure of message data, message spoofing, message alteration, message replay and other attacks are countered by the security procedures built into OPC UA.
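Two of these countermeasures, against message alteration and message replay, can be illustrated with a keyed signature and a sequence number. OPC UA Secure Conversation does carry sequence numbers and signatures, but the packet layout, key and helper names in this Python sketch are our own simplification, not the real format.

```python
import hashlib
import hmac
import struct

TAG_LEN = 32  # HMAC-SHA256 output size in bytes

def make_packet(key: bytes, seq: int, payload: bytes) -> bytes:
    """Toy packet: UInt32 sequence number + payload, followed by an HMAC-SHA256 tag."""
    body = struct.pack("<I", seq) + payload
    return body + hmac.new(key, body, hashlib.sha256).digest()

class Receiver:
    """Rejects altered or spoofed packets (bad tag) and replays (non-increasing sequence)."""

    def __init__(self, key: bytes) -> None:
        self.key = key
        self.last_seq = 0

    def accept(self, packet: bytes) -> bool:
        if len(packet) < 4 + TAG_LEN:
            return False  # too short to hold a sequence number and a tag
        body, tag = packet[:-TAG_LEN], packet[-TAG_LEN:]
        expected = hmac.new(self.key, body, hashlib.sha256).digest()
        if not hmac.compare_digest(tag, expected):
            return False  # altered in transit, or signed with the wrong key
        (seq,) = struct.unpack_from("<I", body, 0)
        if seq <= self.last_seq:
            return False  # already seen: a replay
        self.last_seq = seq
        return True

key = b"shared-channel-key"
rx = Receiver(key)
p1 = make_packet(key, 1, b"write setpoint=120")
p2 = make_packet(key, 2, b"write setpoint=125")
tampered = bytearray(p2)
tampered[-TAG_LEN - 1] ^= 0x01  # flip one bit of the payload

results = [rx.accept(p1), rx.accept(p1), rx.accept(bytes(tampered)), rx.accept(p2)]
print(results)  # [True, False, False, True]
```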

OPC UA Certification

When you submit a device, like an OPC UA Server device, for testing at an OPC Foundation test facility, you get not only a rigorous evaluation of your device but also an evaluation of how well it works with other devices in a sophisticated network. That’s more than you can do on your own unless you are a global megacorporation.

In the case of the OPC Foundation tests, there is a specific process that you must follow:

- Complete your internal testing and validate that your device performs as you expect it to perform. You should have completed both your functional and stress testing before submitting it for an OPC UA certification test.

- Use the online OPC Foundation Registration Wizard to register for your test.

- Receive the notice from the foundation regarding your test date and ship your device to the test site. You may wish to accompany your device but that is not required.

- Wait for the test to be conducted.

- Receive the list of errors, or, if you pass all tests, receive the Certification statement that your device is certified.

- You will also receive an X.509 certificate that can be stored in your device and made available to Client devices that request it.

OPC UA in Review

OPC UA is an architecture that systematizes how to model data, model systems, model machines and model entire plants. You can model anything in OPC UA. OPC UA is a systems architecture that promotes interoperability between all types and manner of systems in various kinds of applications.

It is a technology that allows users to customize how data is organized and how information about that data is reported. Notifications on user-selected events can be made on criteria chosen by the user, including by-exception. It is a scalable technology that can be deployed on small embedded devices and larger Servers. It functions as well in an IT database application as on a recipe management system, on a factory floor, or a maintenance application in a Building Automation System.
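Reporting by exception can be illustrated with a deadband filter: a sample is reported only when it differs from the last reported value by more than a threshold. The Python sketch below is modeled loosely on OPC UA's absolute deadband data-change filter; the class name and sample values are hypothetical.

```python
from typing import List, Optional

class DeadbandItem:
    """Report a sample only when it moves more than `deadband` from the last reported value."""

    def __init__(self, deadband: float) -> None:
        self.deadband = deadband
        self.last_reported: Optional[float] = None

    def should_report(self, value: float) -> bool:
        if self.last_reported is None or abs(value - self.last_reported) > self.deadband:
            self.last_reported = value
            return True
        return False

item = DeadbandItem(deadband=0.5)
samples = [20.0, 20.2, 20.4, 20.6, 21.3, 21.1]
notifications: List[float] = []
for sample in samples:
    if item.should_report(sample):
        notifications.append(sample)

print(notifications)  # [20.0, 20.6, 21.3]
```

Six samples produce three notifications; the user chooses the threshold, and a larger deadband suppresses more of the noise.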

It is a technology that provides layers of security that include authorization, authentication, and auditing. The security level can be chosen at runtime and tailored to the needs of the application or plant environment.

This isn’t to say that OPC UA is “better” than EtherNet/IP, PROFINET IO or Modbus TCP. For moving I/O data around a machine, these protocols provide the right combination of transports, functionality and simplicity to enable machine control with networked I/O. They are very good technologies for the machine control level of the automation hierarchy.

In the end, OPC UA increases productivity, enhances quality and lowers costs by providing not only more data, but also information, and the right kind of information, to the production, maintenance and IT systems that need it when they need it. That’s the stuff that EtherNet/IP, PROFINET IO, Modbus TCP and all the rest just can’t do.